This is the final part of the Hacking CI/CD Pipelines series, and our journey comes to an end with Azure Pipelines.

Azure DevOps is a comprehensive suite of development tools (including Boards, Repos, and Test Plans), but Azure Pipelines is the specific CI/CD component we are targeting. It’s widely used in enterprise environments because it is included within the Azure subscription and integrates natively with other Microsoft services.

Setup #

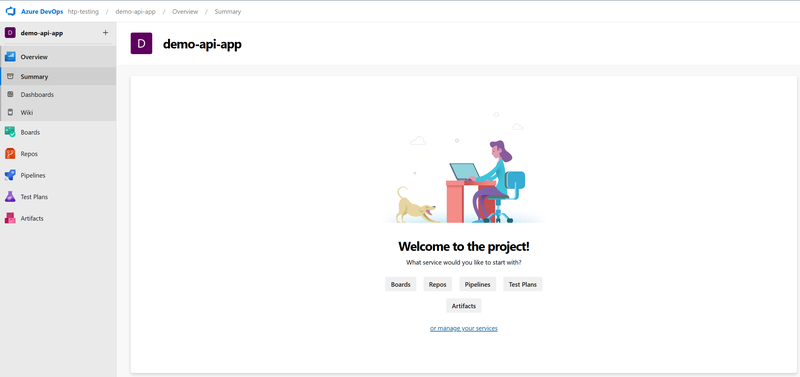

To get started, we need to set up an Azure DevOps organization and project to host the pipeline, then connect it to the GitHub repository containing demo-api-app.

Repository Connection #

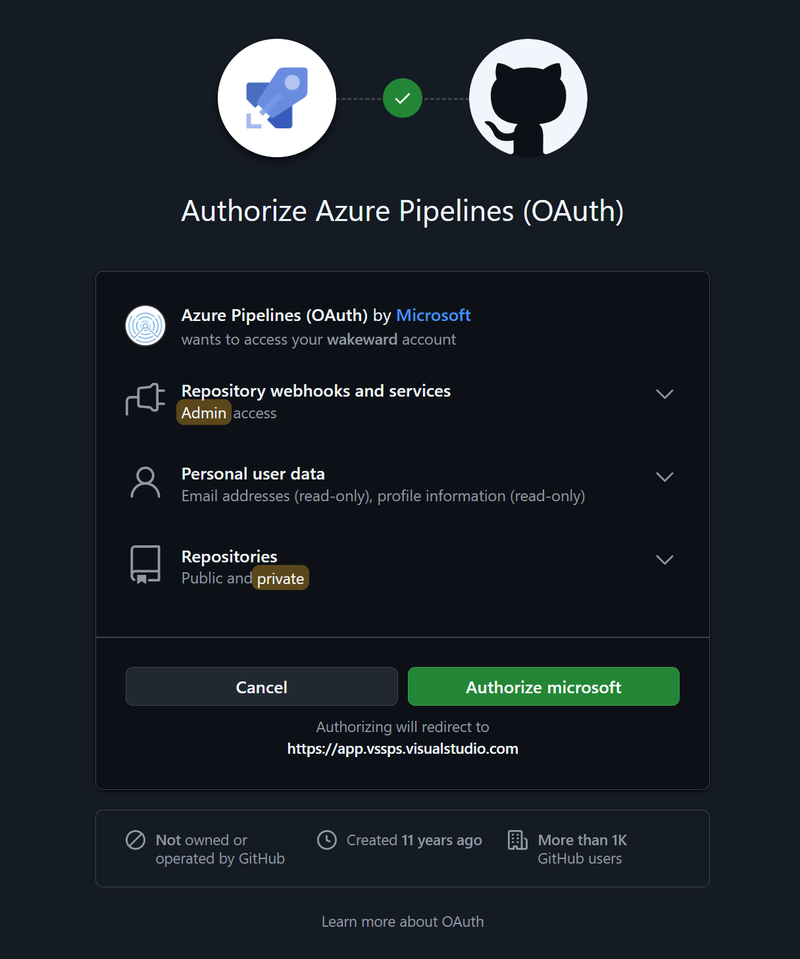

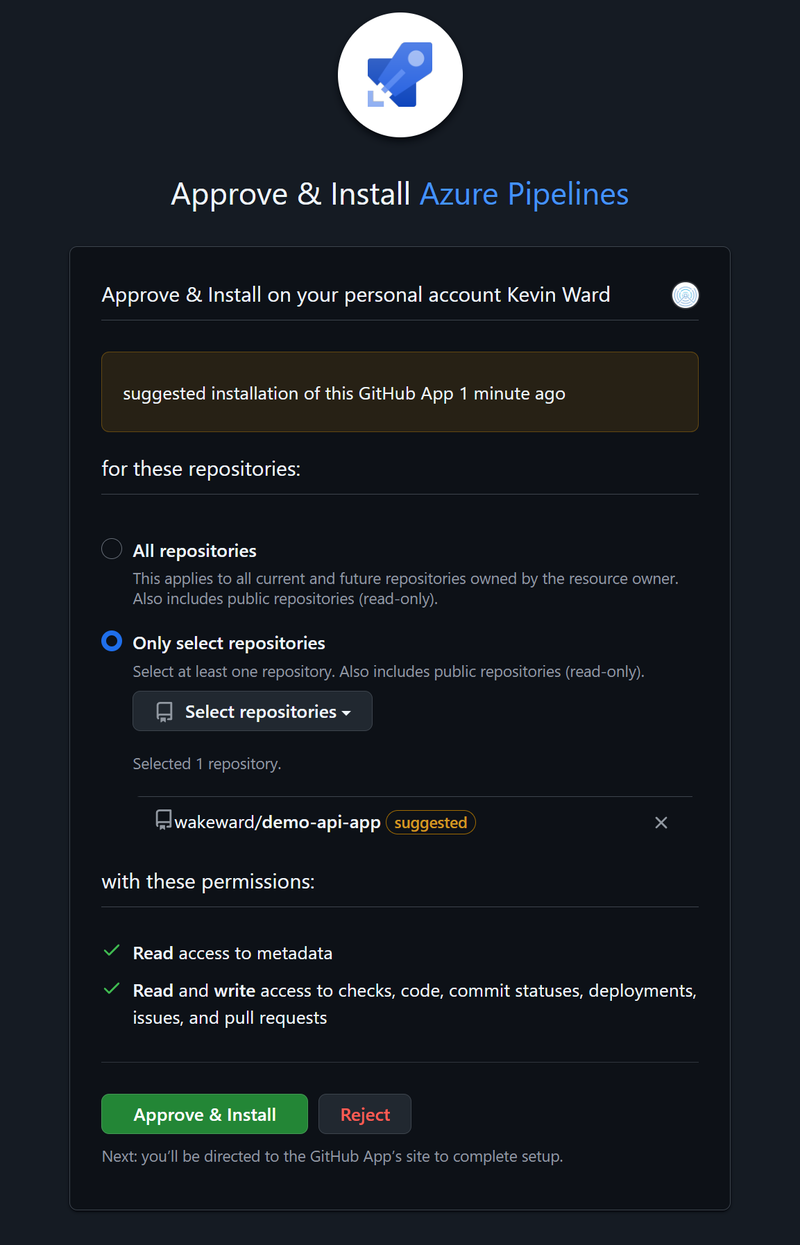

To connect our external GitHub repository demo-api-app to the Azure DevOps project, navigate to Pipelines in the left sidebar and click New Pipeline. Under “Where is your code?”, select GitHub. You will be redirected to GitHub to sign in and authorize Azure Pipelines.

The requested permissions initially seem a little heavy, but you can proceed to select a specific repository from a list.

Once connected, you will be prompted to configure your pipeline. Since we are creating a new one, select the Starter pipeline, which gives you a basic YAML file.
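For reference, the Starter pipeline template looks roughly like the sketch below. This is an approximation from memory rather than a verbatim copy, and the exact contents may differ depending on when you create the pipeline.

```yaml
# Starter pipeline (approximate): runs on every push to main
trigger:
- main

pool:
  vmImage: ubuntu-latest   # Microsoft-hosted Linux agent

steps:
- script: echo Hello, world!
  displayName: 'Run a one-line script'

- script: |
    echo Add other tasks to build, test, and deploy your project.
    echo See https://aka.ms/yaml
  displayName: 'Run a multi-line script'
```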


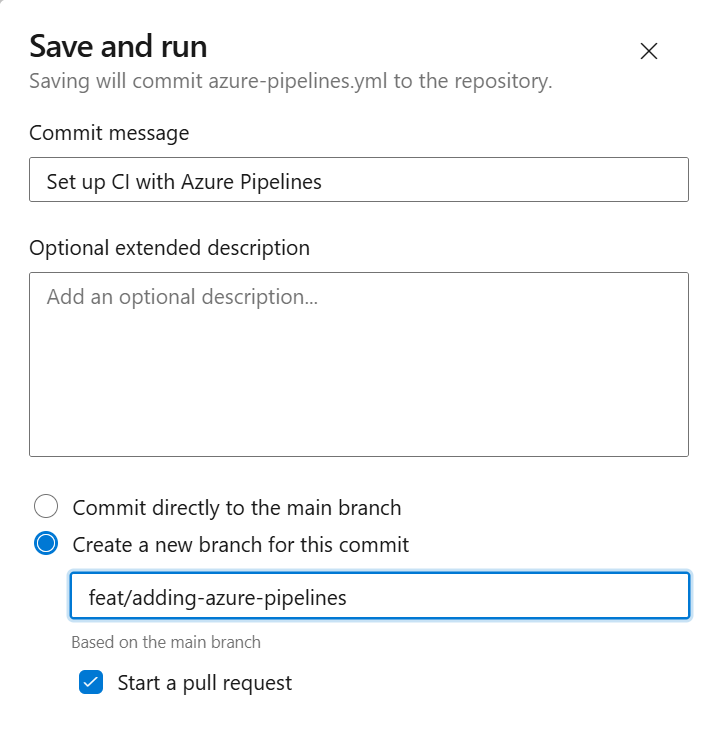

Finish off by saving and running the “Hello, world!” pipeline, committing it to a new branch.

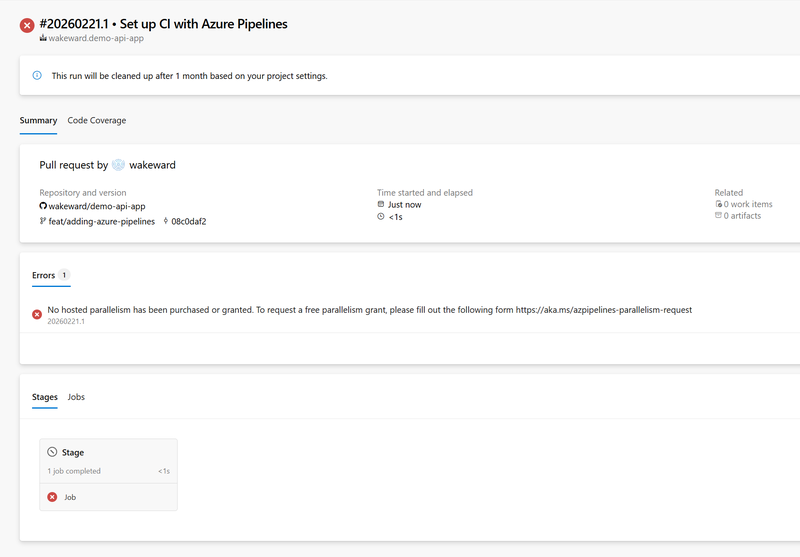

The build returns an error (this is what I get for using a free account 😂)

Apparently, to run even the basic “Hello, world!” example, you need to submit a “parallelism request”… 🤷

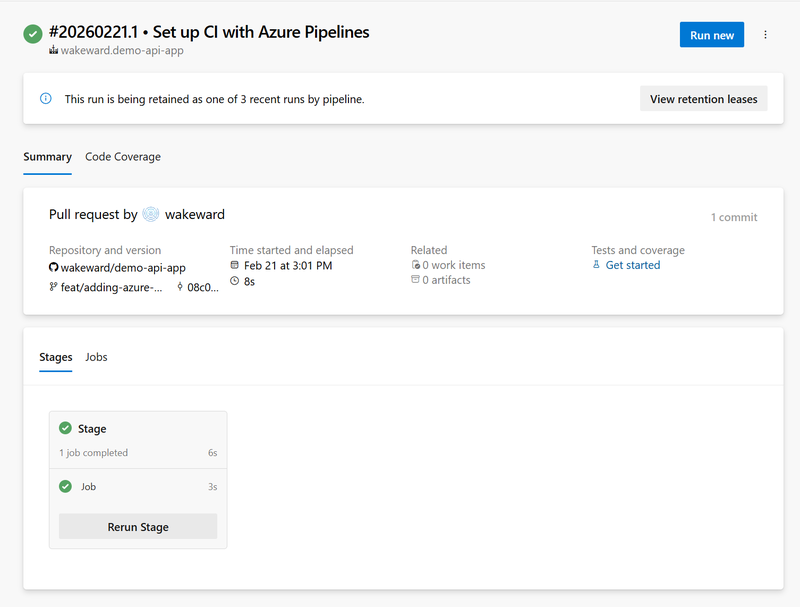

After sending the request and waiting for parallelism to be enabled, re-running the example succeeds.

Pipeline Definition #

Azure Pipelines uses YAML definitions, typically named azure-pipelines.yml. Just like our previous examples, we’ll set up two steps:

- Build and Test: Compiles the Go application and runs tests.

- Docker Build and Push: Builds the container image and pushes it to DockerHub.

We also need to decide which agent the pipeline runs on. The primary options are Linux (Ubuntu), Windows, and macOS; we’ll stick with a Linux-based Microsoft-hosted agent (vmImage: 'ubuntu-latest').

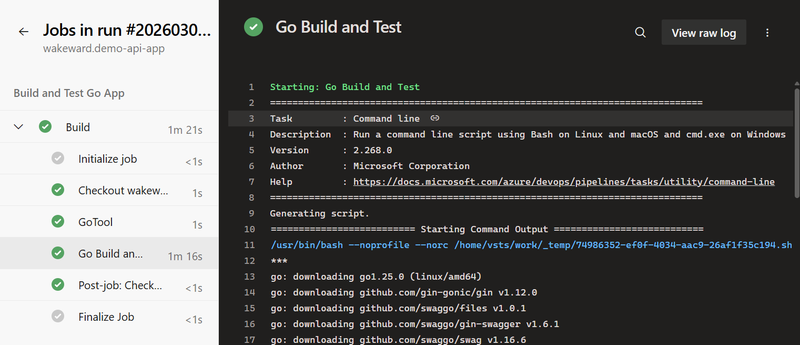

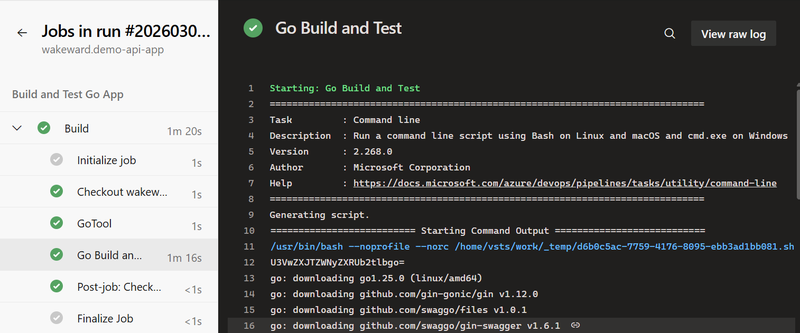

For the first stage, Microsoft provides several examples of how to build projects in different languages. For Go, we can specify the version we want to use and run the standard commands.

Go Build and Test
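A minimal sketch of what such a build-and-test step could look like. The Go version and the display names are assumptions for illustration, not values taken from the original pipeline.

```yaml
steps:
# Install a specific Go toolchain on the agent (version is an assumption)
- task: GoTool@0
  inputs:
    version: '1.22'
  displayName: 'Install Go'

# Compile the application and run its tests from the repository root
- script: |
    go version
    go build -v ./...
    go test -v ./...
  displayName: 'Build and test'
```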


For the second stage, we can use the Docker@2 task to build and push the container image.

Docker Build and Push
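A hedged sketch of a Docker@2 step that builds and pushes in one go. DockerHubConnection is the service connection name used later in this post; the repository name your-docker-id/demo-api-app and the tag scheme are hypothetical placeholders.

```yaml
steps:
- task: Docker@2
  displayName: 'Build and push image'
  inputs:
    containerRegistry: 'DockerHubConnection'    # Docker Registry service connection
    repository: 'your-docker-id/demo-api-app'   # hypothetical Docker Hub repository
    command: 'buildAndPush'
    Dockerfile: '**/Dockerfile'
    tags: |
      $(Build.BuildId)
      latest
```

Note that the pipeline only names the service connection; the DockerHub credentials themselves never appear in the YAML.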


Bringing it all together, we have the complete pipeline definition.

Full Pipeline
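One way the two parts could be combined, sketched here as two stages so that the image is only built once the tests pass. The stage and job names, the Go version, and the repository name are assumptions; the original definition may equally have used a flat list of steps.

```yaml
trigger:
- main

pool:
  vmImage: 'ubuntu-latest'

stages:
- stage: BuildAndTest
  displayName: 'Build and Test'
  jobs:
  - job: Go
    steps:
    - task: GoTool@0
      inputs:
        version: '1.22'          # assumed Go version
    - script: |
        go build -v ./...
        go test -v ./...
      displayName: 'Build and test'

- stage: DockerBuildPush
  displayName: 'Docker Build and Push'
  dependsOn: BuildAndTest        # only runs after the first stage succeeds
  jobs:
  - job: Docker
    steps:
    - task: Docker@2
      inputs:
        containerRegistry: 'DockerHubConnection'
        repository: 'your-docker-id/demo-api-app'   # hypothetical repository
        command: 'buildAndPush'
        Dockerfile: '**/Dockerfile'
        tags: |
          $(Build.BuildId)
          latest
```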


Pipeline Secrets and Connectivity #

Before we run the pipeline, we need to set up our secrets for DockerHub and the custom secret we want to exfiltrate later. For access to DockerHub, Azure Pipelines handles this slightly differently from the other platforms we set up, by using service connections. Service connections abstract credentials for external services; they can be restricted to specific pipelines and protected with approval checks.

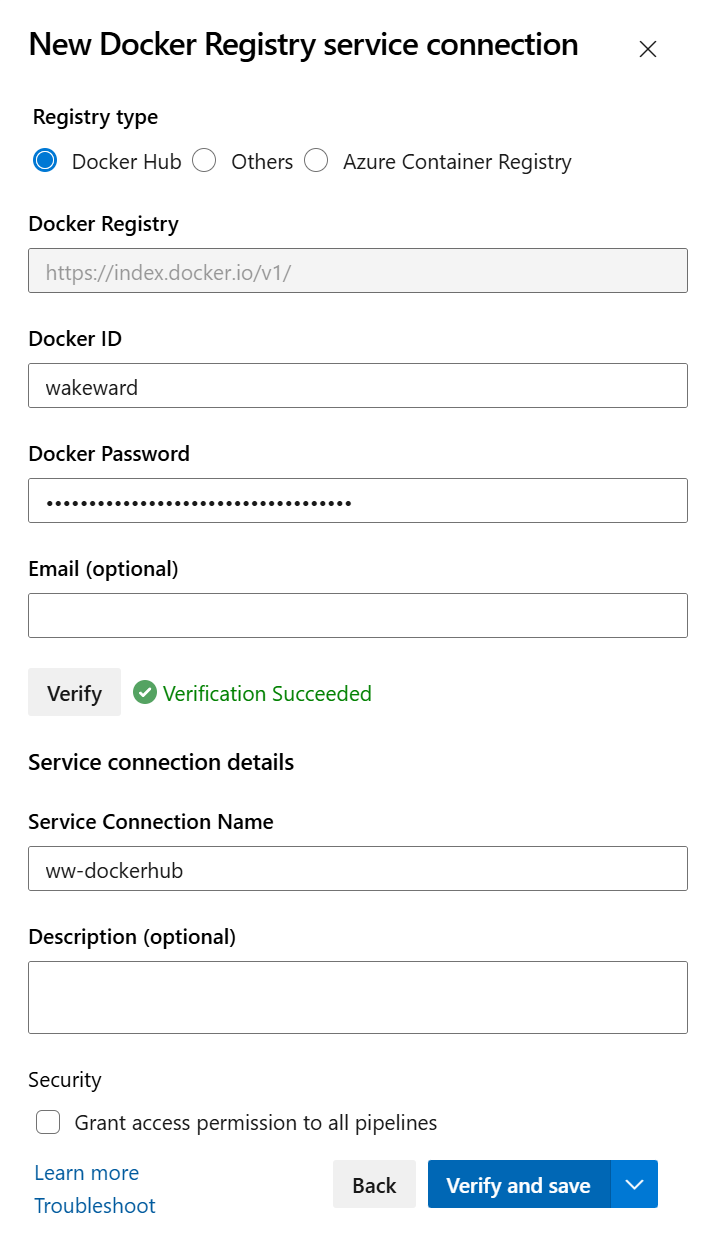

To connect our DockerHub account to Azure DevOps, navigate to Project settings > Service connections > New service connection and select Docker Registry.

- Select Docker Hub.

- Enter your Docker ID and Password (or Access Token).

- Name the connection DockerHubConnection.

- Click Verify and save.

The service connection is then referenced in our pipeline definition file as a variable.

For our custom secret we want to exfiltrate later, we will use a Variable Group.

- Navigate to Pipelines > Library.

- Click + Variable group.

- Name it demo-api-secrets.

- Add a variable named SecretToken with the value SuperSecretToken.

- Click the padlock icon to make it a secret (this masks the value in logs).

- Save the group.

Variables can be linked to Azure Key Vault for additional security.

To use this variable group in our pipeline, we need to reference it in the pipeline definition file.
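A minimal sketch of that reference, using the group name created above:

```yaml
variables:
- group: demo-api-secrets   # variable group defined under Pipelines > Library
```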


This makes the variables available to the pipeline, but there is a catch which we will discuss later.

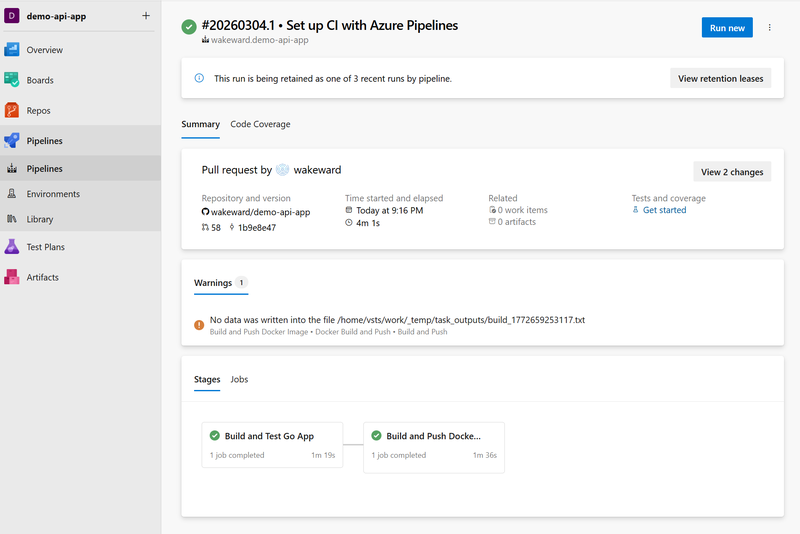

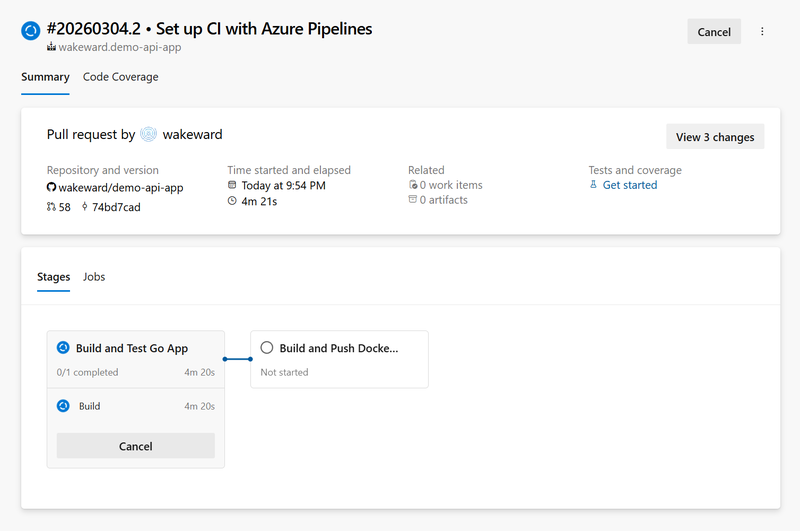

With all this configured, we can commit the changes to the azure-pipelines.yml and trigger the pipeline.

Success! 🏆

Validating the Build #

With the container image built, we can check it by pulling it from DockerHub and testing it out.


With our pipeline successfully building the app and container image, we can move on to exploitation.

Exploitation: Remote Code Execution #

Let’s start with obtaining remote code execution. We’ve seen a scripted section within our pipeline definition file, which we can modify to spawn a reverse shell.


We add this in before building our Go binary and commit our changes to the demo-api-app repository. If successful, our pipeline will hang whilst we obtain a reverse shell.

Enumerating the Agent #

We receive a reverse shell.

Running env returns 164 environment variables! I won’t list them all, but we can see that our service connection is listed and doesn’t leak the DockerHub PAT. Additionally, the secret we created (from the variable group) is not included by default. The remaining environment variables are essentially runner metadata and system and tool paths.


Running id reveals that the user is part of the docker group.

Reviewing sudo permissions and Linux capabilities confirms the runner is effectively running as root.


No significant mount points were discovered.


One interesting finding was that the build log contained no information on the executed reverse shell. There was information about the Go build, but the scripted command was not shown. The only signal was a longer build time.

Next up, let’s look at stealing those credentials which have so far eluded us.

Exploitation: Stealing Credentials #

Previously we defined a variable group for our custom secret, but even if we declare it in our pipeline definition file, Azure DevOps does NOT automatically inject secret variables into the environment. The variable will be empty unless you explicitly map it in the YAML.
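A minimal sketch of such a mapping. The environment variable name SECRET_TOKEN is an assumption chosen for illustration; only the group name and the SecretToken variable come from the setup above.

```yaml
variables:
- group: demo-api-secrets

steps:
- script: |
    # SECRET_TOKEN is only populated because of the env mapping below
    if [ -n "$SECRET_TOKEN" ]; then
      echo "SECRET_TOKEN is set"
    else
      echo "SECRET_TOKEN is empty"
    fi
  displayName: 'Check secret mapping'
  env:
    SECRET_TOKEN: $(SecretToken)   # explicit mapping of the secret variable
```

Removing the env block from the step should make the check report the variable as empty, which is the default behaviour described above.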

Although this is a strong security default, in our scenario we have access to the pipeline definition file, so if we suspect that a secret exists, we can always declare it ourselves. This does assume prior knowledge of the secret’s name; failing that, we can declare several environment variables in the hope that one matches (spraying well-known or common variable names).

This may seem speculative, but if the code you are looking at is built and shipped to a package manager, it probably relies on an access token, and developers tend to name these in a predictable format (e.g. GO_PACKAGE_ACCESS_KEY).

Exfiltration via Logs #

Let’s start by trying to dump the credentials in the build log. The build stage can be edited to declare the secret variable and print it.

As previously discussed, we need to ensure the variable group is referenced under the variables section.

The log output shows that the secret value has been masked with ***.

Let’s use the base64 encode trick to see if that will work.
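The idea is that log masking matches the literal secret value, so an encoded form of it no longer matches. A minimal sketch, assuming the secret is mapped to a hypothetical SECRET_TOKEN environment variable:

```yaml
variables:
- group: demo-api-secrets

steps:
- script: |
    # Printing the raw value: this is the case the log masker catches
    echo "raw: $SECRET_TOKEN"
    # Printing an encoded form: the output no longer contains the literal secret
    printf '%s' "$SECRET_TOKEN" | base64
  displayName: 'Print secret'
  env:
    SECRET_TOKEN: $(SecretToken)
```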


Decoding the base64 string, we obtain our secret.

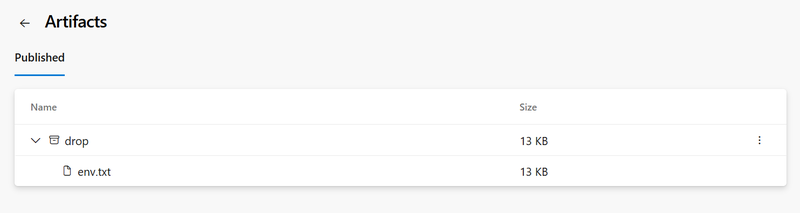

Exfiltration via Artifacts #

Before we wrap up, there is another method we can use to exfiltrate secrets. Much like CircleCI, we can write the environment/secrets to a file and publish it as a build artifact. Rather than include our build and push stages, we’ll focus on this technique and change only the pipeline definition file.
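A hedged sketch of what such a definition could look like. The file name env.txt matches the one referenced in this post; the artifact name env-dump and the SECRET_TOKEN mapping are assumptions.

```yaml
variables:
- group: demo-api-secrets

steps:
# Write the step's full environment, including the mapped secret, to a file
- script: env | sort > "$(Build.ArtifactStagingDirectory)/env.txt"
  displayName: 'Dump environment to file'
  env:
    SECRET_TOKEN: $(SecretToken)

# Publish the staging directory as a build artifact attached to the run
- task: PublishBuildArtifacts@1
  inputs:
    PathtoPublish: '$(Build.ArtifactStagingDirectory)'
    ArtifactName: 'env-dump'       # hypothetical artifact name
    publishLocation: 'Container'
```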

Reviewing the pipeline run, we see that the artefact is published and contains env.txt.

Downloading the file, we see a dump of all the environment variables, including the secret we mapped.

Wrap Up #

And that’s it. In this post we’ve reviewed how Azure Pipelines works and how it handles common integrations through service connections, which reduce the risk of secret exposure. For custom secrets, we’ve seen that you need to explicitly map a secret to an environment variable, and that the build log offers only basic protection against accidental leakage.

For me, the most significant finding is that the reverse connection was not shown in the build log. This means that anyone auditing it would have no idea that it happened, other than from the history trail in git or potentially SecOps detecting an outbound call to an unauthorised endpoint. In my experience, these types of activities and behaviours are not audited, so I can see them easily slipping past security.

Series Conclusion #

We’ve covered 5 major CI/CD platforms, each sharing similar issues with slight variances. So what would be my advice for protecting your pipelines?

Key Takeaways:

- The Pipeline is Production: Treat your pipeline as an extension of production. In the age of ephemeral artefact promotion, having access to CI/CD is very powerful. Ensure you protect your pipeline definition file and review changes to it via a protected branch (e.g. PR reviews).

- Endpoint Detection and Response: Given the evolving threats and how heavily development environments are being targeted, applying some level of anomaly detection will help. The latest wave of attacks isn’t even dropping tailored scripts, but rather instructions that coerce an AI agent into performing malicious actions. Even so, irregular outbound calls can be intercepted and blocked for security to review.

- Handling Secrets: Secrets are a prime target (along with crypto wallets) for adversaries. Use short-lived credentials wherever possible (e.g. OIDC). For secrets that cannot be dynamic, assume compromise and increase the rotation frequency. If anything, this will test your response procedures for rotating secrets and blocking any compromised packages from further distribution.

If you’ve made it this far, thank you. I hope you found it insightful and a useful reference point. Now TTL.